A quantum backend for the human brain

Q50 proposes a next-generation brain–computer interface that connects high-density neural recordings to quantum computing systems, aiming at signal decoding and cognitive augmentation that may be impractical on classical architectures alone.

The front end is a Neuralink-style cortical implant. The backend is a quantum processor. The gap between them — filtering, encoding, and interpreting spike trains and local field potentials — is where Q50 operates.

As electrode density scales toward whole-cortex sampling, the number of joint activity patterns a classical decoder must distinguish grows exponentially with the number of recorded units. Quantum machine learning (variational circuits, kernel methods, amplitude encoding) is a candidate path through that wall.

| Started | Cortical Grid | Sampling (Hz) | Ticks | Active Nodes | |
|---|---|---|---|---|---|
| 2024-03-15 12:00 (RUNNING) | 256 × 256 | 100 | 482,300 | 1,847 / 94,210 | Analyzer |
| 2024-02-10 09:00 | 128 × 128 | 50 | 1,200,000 | 0 / 58,341 | Analyzer |
| 2024-01-01 00:00 | 64 × 64 × 64 | 200 | 650,000 | 0 / 22,100 | Analyzer |

Live Neural Simulation

Why Q50?

Quantum-Enhanced Neural Interfaces: A Technical Overview

Neural data acquisition

The foundation of this framework lies in the evolution of Neuralink-style brain–computer interfaces as ultra-high-bandwidth neural data acquisition systems. These systems rely on dense arrays of biocompatible electrodes implanted within cortical regions, capable of recording large-scale neural activity with fine temporal resolution. Each electrode site captures the summed extracellular potential of nearby neurons, yielding a continuous stream of raw voltage traces that must be digitized, filtered, and interpreted in real time.

The resulting data consists of complex, high-frequency spike trains and local field potentials, representing the aggregate dynamics of neuronal populations engaged in perception, motor control, and cognition. Individual spikes are isolated through spike-sorting algorithms that classify waveform shapes into putative single units — a process that becomes increasingly error-prone as electrode counts grow and firing rates increase. Despite the increasing fidelity of these recordings, a fundamental bottleneck persists: accurately decoding intent, reconstructing internal states, and extracting meaningful structure from signals that are inherently noisy, nonlinear, and distributed across vast neural networks.

The classical decoding ceiling

Current Neuralink-style systems primarily depend on classical machine learning models — deep neural networks and probabilistic decoders — to map neural activity to outputs such as cursor movement or text generation. Recurrent architectures such as LSTMs and transformer-based decoders have shown promise for capturing temporal dependencies in spike trains, but they remain fundamentally constrained by the hardware they run on. While effective at modest electrode counts, these approaches face scalability constraints when dealing with the combinatorial complexity of brain-wide activity patterns.

The dimensionality of the recorded signal grows linearly with the number of recorded neurons, but the number of distinct joint activity patterns grows exponentially. As electrode density increases and interfaces approach whole-cortex sampling, exhaustively modeling that pattern space moves from difficult to intractable under conventional computational frameworks. Even with aggressive dimensionality reduction techniques (principal component analysis, manifold learning, tensor decompositions), the underlying complexity cannot be fully collapsed without information loss that degrades decoding accuracy.
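As a rough illustration of the scaling, assume (for this sketch only) that each of N recorded units is binarized to "spiking" or "silent" within a single time bin. The set of possible population patterns, written here as S_N, then has size:

```latex
\[
  |\mathcal{S}_N| = 2^{N}, \qquad
  |\mathcal{S}_{10}| = 1024, \qquad
  |\mathcal{S}_{100}| = 2^{100} \approx 1.27 \times 10^{30}.
\]
```

Real recordings are far from uniformly distributed over these patterns, which is why dimensionality reduction helps at all; the count only bounds the space a fully general decoder would have to cover.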


Quantum computing as co-processor

Rather than interfacing directly with biological tissue, quantum processors would operate as specialized co-processors within the broader neural data pipeline. By leveraging superposition and entanglement, quantum systems can represent and manipulate certain high-dimensional probability distributions in ways that are inefficient for classical architectures. The state of an n-qubit register is described by 2ⁿ complex amplitudes, although a measurement returns only n classical bits; this makes such registers a natural candidate for encoding the large joint probability distributions that characterize correlated neural population activity.
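The relationship between qubit count and state size can be made concrete with a tiny classical state-vector simulation. This is a sketch for intuition only: the function names are hypothetical, and simulating a register this way costs memory exponential in the number of qubits, which is exactly what real hardware is meant to avoid.

```python
import math
import random


def uniform_superposition(n_qubits):
    """State vector after applying a Hadamard gate to each of n_qubits >= 1 qubits."""
    dim = 2 ** n_qubits
    return [1.0 / math.sqrt(dim)] * dim


def measure(state, rng):
    """Sample one basis state; returns a bit string with one bit per qubit."""
    probs = [abs(a) ** 2 for a in state]
    index = rng.choices(range(len(state)), weights=probs)[0]
    n_qubits = (len(state) - 1).bit_length()
    return format(index, "0{}b".format(n_qubits))


state = uniform_superposition(3)   # 3 qubits -> 8 amplitudes
sample = measure(state, random.Random(0))
print(len(state), sample)
```

Each run of the circuit yields only one n-bit sample, so any readout of the encoded distribution has to come from repeated measurements or from an algorithm designed around that limit.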

Quantum machine learning techniques offer potential pathways for modeling the latent structure of neural activity at scales that strain classical methods. Critically, the proposal does not presuppose fault-tolerant universal quantum computers: it targets near-term noisy intermediate-scale quantum (NISQ) devices combined with hybrid classical–quantum optimization loops. Whether such devices can deliver a practical advantage on the high-dimensional classification and regression tasks relevant to neural decoding remains an open research question.

- Variational quantum circuits: parameterized quantum gates optimized via classical outer-loop algorithms to approximate target functions; used here for neural state classification and intent decoding.

- Quantum kernel methods: implicitly compute inner products in exponentially large feature spaces via quantum interference, enabling SVM-class models over neural manifolds that may be costly to evaluate classically.

- Amplitude encoding: neural feature vectors encoded into the amplitudes of a quantum state; n features require only ⌈log₂(n)⌉ qubits, enabling compact representation of high-dimensional signals, although preparing an arbitrary state generally takes a number of gates that grows with n.

- Quantum entanglement: correlations between qubits that have no classical analogue, exploited here to capture long-range dependencies between spatially distributed cortical recording sites.
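Amplitude encoding and a fidelity-style quantum kernel can be sketched classically for small vectors. Everything below is illustrative: `amplitude_encode` and `fidelity_kernel` are hypothetical names, the kernel |⟨ψ(x)|ψ(y)⟩|² is one common choice rather than anything Q50 specifies, and on hardware the overlap would be estimated from measurements (for example with a swap test) rather than computed directly.

```python
import math


def amplitude_encode(features):
    """Return (amplitudes, n_qubits) for a real-valued feature vector.

    The vector is zero-padded to the next power of two and L2-normalized,
    so the squared amplitudes sum to 1 as a quantum state requires.
    """
    if not any(features):
        raise ValueError("cannot encode an all-zero vector")
    n_qubits = max(1, (len(features) - 1).bit_length())
    padded = list(features) + [0.0] * (2 ** n_qubits - len(features))
    norm = math.sqrt(sum(x * x for x in padded))
    return [x / norm for x in padded], n_qubits


def fidelity_kernel(x, y):
    """Squared overlap between the amplitude-encoded states of x and y."""
    ax, _ = amplitude_encode(x)
    ay, _ = amplitude_encode(y)
    if len(ax) != len(ay):
        raise ValueError("feature vectors must encode to the same register size")
    overlap = sum(a * b for a, b in zip(ax, ay))
    return overlap ** 2


# 3 firing-rate features are padded to 4 amplitudes on 2 qubits.
amps, n_qubits = amplitude_encode([3.0, 0.0, 4.0])
print(n_qubits, amps)
```

Note that normalization discards the overall magnitude of the feature vector, so two population vectors that differ only in total firing rate map to the same state; a real design would need to carry that scale separately if it matters for decoding.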

The proposed pipeline

Neural data captured by the implant undergoes initial preprocessing through classical pipelines — filtering, spike sorting, and feature extraction — before being transformed into representations compatible with quantum algorithms. Bandpass filtering separates high-frequency multiunit activity from low-frequency local field potentials; spike detection thresholds isolate individual action potentials; and population firing rate vectors are computed over sliding time windows to produce the feature tensors that serve as input to the quantum encoding stage.
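The last two classical steps, threshold-based spike detection and sliding-window firing rates, can be sketched for a single channel as follows. This assumes the trace has already been band-pass filtered. The threshold of k times a median-based noise estimate is a common convention in the spike-sorting literature, not something specified by Q50, and the function names and parameters are hypothetical.

```python
import statistics


def detect_spikes(trace, k=4.0):
    """Sample indices where the trace crosses below -k * sigma.

    sigma is a robust noise estimate, median(|x|) / 0.6745, which is less
    inflated by the spikes themselves than the plain standard deviation.
    """
    sigma = statistics.median(abs(v) for v in trace) / 0.6745
    threshold = -k * sigma
    return [
        i for i in range(1, len(trace))
        if trace[i] < threshold <= trace[i - 1]   # downward crossing only
    ]


def firing_rates(spike_samples, n_samples, fs, window_s, step_s):
    """Firing rate (spikes per second) in each sliding window."""
    window = int(round(window_s * fs))
    step = int(round(step_s * fs))
    rates = []
    for start in range(0, n_samples - window + 1, step):
        count = sum(start <= s < start + window for s in spike_samples)
        rates.append(count / window_s)
    return rates


# Synthetic trace: +/-1 "noise" with two large negative deflections.
trace = [1.0 if i % 2 == 0 else -1.0 for i in range(200)]
trace[50] = -20.0
trace[150] = -20.0
spikes = detect_spikes(trace)
print(spikes, firing_rates(spikes, len(trace), fs=100, window_s=1.0, step_s=1.0))
```

Stacking the per-channel rate lists across electrodes gives the population firing-rate vectors that the text describes as input to the encoding stage.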

These representations are embedded into quantum states via amplitude encoding or basis encoding schemes, enabling exploration of complex neural manifolds and identification of subtle correlations across distributed brain regions. Quantum-enhanced optimization routines — running on co-located QPU hardware or accessed via cloud quantum backends — then refine decoding model parameters in real time, continuously improving the accuracy and latency of brain–computer interaction.
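The hybrid loop described here (a quantum circuit evaluates a model, a classical optimizer updates its parameters) can be illustrated with a one-qubit toy, simulated classically. The circuit, data, learning rate, and function names are all assumptions made for this sketch: a feature x is encoded with an RY(x) rotation, a trainable RY(θ) follows, and the output is the expectation value ⟨Z⟩. Gradients use the parameter-shift rule, which for this gate is exact.

```python
import math


def ry(state, angle):
    """Apply an RY(angle) rotation to a one-qubit state with real amplitudes."""
    c, s = math.cos(angle / 2), math.sin(angle / 2)
    a0, a1 = state
    return (c * a0 - s * a1, s * a0 + c * a1)


def expect_z(x, theta):
    """<Z> after RY(x) (data encoding) then RY(theta) (trainable) on |0>."""
    a0, a1 = ry(ry((1.0, 0.0), x), theta)
    return a0 ** 2 - a1 ** 2


def gradient(data, theta):
    """Gradient of the mean squared error, using the parameter-shift rule."""
    total = 0.0
    for x, y in data:
        shift = (expect_z(x, theta + math.pi / 2)
                 - expect_z(x, theta - math.pi / 2)) / 2
        total += 2 * (expect_z(x, theta) - y) * shift
    return total / len(data)


def train(data, theta=0.0, lr=0.2, steps=200):
    """Classical outer loop: plain gradient descent on theta."""
    for _ in range(steps):
        theta -= lr * gradient(data, theta)
    return theta


# Toy targets generated by the same circuit with theta = 0.7.
data = [(x, expect_z(x, 0.7)) for x in (0.0, 0.5, 1.0, 1.5, 2.0)]
print(train(data))
```

On real hardware each call to `expect_z` would be an average over many circuit executions, so the number of shots per gradient step is a significant part of the latency budget for any real-time use.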

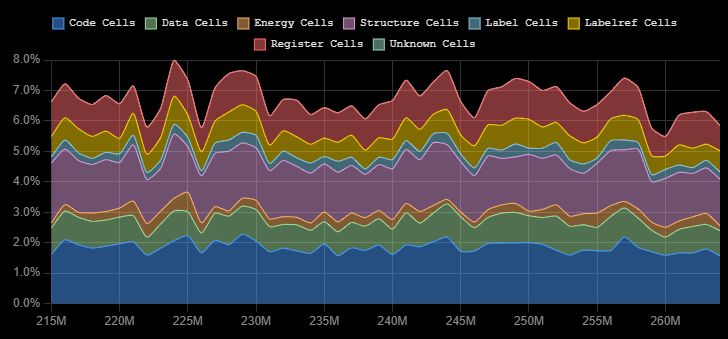

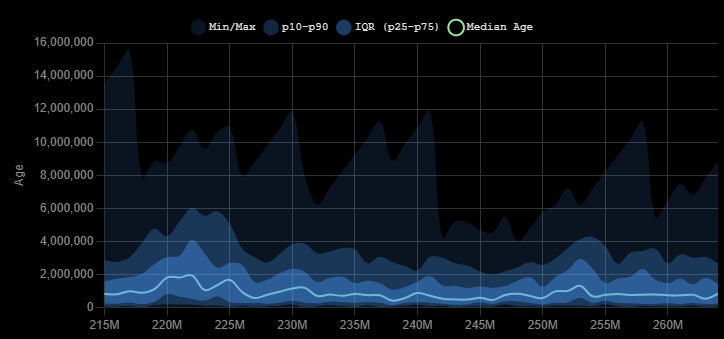

Temporal dynamics and lifespan distributions

Neural signals exhibit characteristic temporal structures — burst patterns, oscillatory cycles, and slow drift — that encode context, intention, and state. Theta oscillations (4–8 Hz) correlate with spatial navigation and memory encoding; gamma bursts (30–100 Hz) accompany focused attention and sensory binding; and slow cortical potentials reflect sustained cognitive states over seconds to minutes. Any effective decoding system must be sensitive to all of these timescales simultaneously.
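One simple way to check how much of a signal sits in each of these bands is to sum spectral power over the band's frequency range. The sketch below uses a direct discrete Fourier transform from the standard library so that it stays self-contained; a real pipeline would use an FFT and a windowed estimator. The synthetic signal, sampling rate, and function name are assumptions for illustration.

```python
import cmath
import math


def band_power(signal, fs, f_lo, f_hi):
    """Power of `signal` between f_lo and f_hi (Hz), from a one-sided DFT.

    The Nyquist bin is skipped so that doubling each bin's power to account
    for negative frequencies is always valid.
    """
    n = len(signal)
    power = 0.0
    for k in range(1, (n + 1) // 2):
        freq = k * fs / n
        if f_lo <= freq <= f_hi:
            coeff = sum(v * cmath.exp(-2j * math.pi * k * t / n)
                        for t, v in enumerate(signal))
            power += 2 * abs(coeff) ** 2 / n ** 2
    return power


fs = 250  # Hz, hypothetical sampling rate
signal = [
    math.sin(2 * math.pi * 6 * t / fs) + 0.5 * math.sin(2 * math.pi * 40 * t / fs)
    for t in range(fs)  # one second: a 6 Hz theta and a weaker 40 Hz gamma component
]
theta_power = band_power(signal, fs, 4, 8)
gamma_power = band_power(signal, fs, 30, 100)
print(theta_power, gamma_power)
```

A sinusoid of amplitude A contributes A²/2 to its band, so the theta component dominates here. Note that the 4 to 8 Hz band needs windows long enough to contain several cycles, which is one reason a decoder cannot use a single short window for all timescales.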

The statistical distribution of signal lifespans is essential for accurate decoding and for calibrating quantum circuit depth and error-correction overhead. Circuits that are too shallow fail to capture long-range temporal dependencies; circuits that are too deep accumulate gate errors that overwhelm the quantum signal. Understanding the characteristic timescales present in a given neural dataset directly informs the optimal circuit architecture.
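The depth trade-off can be put in rough numbers with a deliberately crude model: assume every gate fails independently with the same probability, so a circuit of G gates runs error-free with probability (1 − ε)^G. The error rate below is a placeholder rather than a measured value for any device, and the function names are hypothetical.

```python
import math


def error_free_probability(gate_error, n_gates):
    """Probability that none of n_gates independent gates fails."""
    return (1.0 - gate_error) ** n_gates


def max_gates(gate_error, min_success):
    """Largest gate count whose error-free probability stays >= min_success."""
    return math.floor(math.log(min_success) / math.log(1.0 - gate_error))


budget = max_gates(gate_error=0.001, min_success=0.5)
print(budget, error_free_probability(0.001, budget))
```

Under this model the usable gate budget scales roughly as 1/ε, so halving the gate error about doubles the depth available for capturing longer temporal dependencies.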

Augmentation without alteration

The “quantum-enhanced” nature of the system does not imply that the brain itself operates quantum mechanically — a claim that remains scientifically contentious and is not required by this framework. Rather, quantum computational frameworks are used to extend the interpretive and analytical capabilities applied to neural data — a layered neurocomputational system in which biological cognition is augmented not by altering its physical substrate, but by dramatically enhancing the computational processes that interpret and interact with it. The neurons remain biological; the inference engine that reads them becomes quantum.


Conclusions

Taken as a whole, this concept represents a shift from treating a Neuralink-style implant purely as an interface device to understanding it as the front end of a much larger computational ecosystem. Within this ecosystem, quantum computing serves as a backend aimed at the extreme complexity of brain activity, potentially enabling richer forms of communication, more precise neural control, and new modalities of human–machine integration that remain beyond the reach of current technologies. The trajectory from single-electrode recordings to thousand-channel cortical arrays has already demonstrated that scale unlocks capability; extending that trajectory into the quantum domain may represent the next fundamental threshold.

Significant engineering and theoretical challenges remain: quantum error correction, latency constraints imposed by real-time neural decoding, and the practical integration of cryogenic quantum hardware with implanted biological systems. But the conceptual case is compelling: whole-cortex recordings may outgrow what classical computers can efficiently decode at scale, and quantum systems are a candidate for closing that gap.

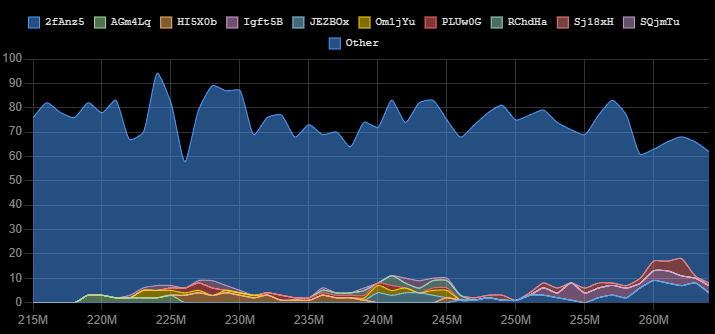

Q50 provides a simulation environment for exploring the population-level dynamics, signal diversity, and temporal structures that such a system would need to decode — a testbed for the computational assumptions that any future quantum neural interface would depend upon.

Explore the Architecture

This demo simulates a Q50 neural session — cortical spike trains preprocessed through a classical pipeline, encoded into quantum-compatible representations, and decoded via variational circuits. Use the full Analyzer to observe population dynamics, clade evolution, and entropy in real time.